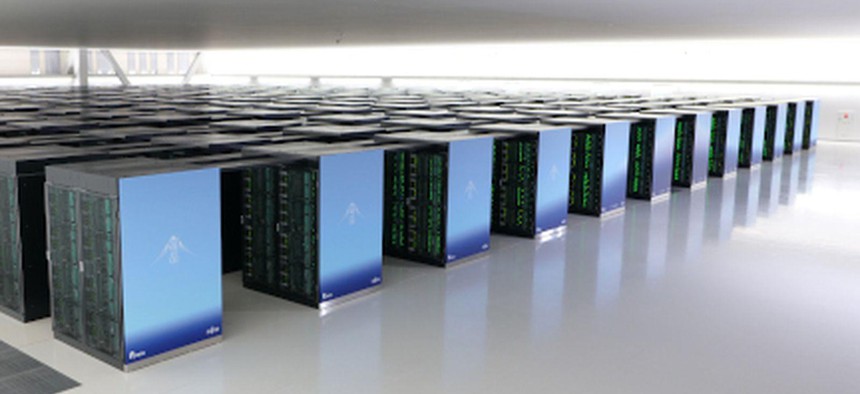

The Fugaku supercomputer, the world's fastest, at Riken Center for Computational Science in Kobe, Japan. (Photo credit: Riken Center for Computational Science, Kobe, Japan)

Inside the Volunteer Supercomputer Team That's Hunting for COVID Clues

The White House's team recently added the world's fastest computer to its informal network of more than 40 supercomputer providers.

The world’s fastest supercomputer teamed up with the White House’s expanding supercomputing effort to fight the novel coronavirus.

Japan’s Fugaku—which surpassed leading U.S. machines on the Top 500 list of global supercomputers in late June—joined the COVID-19 High Performance Computing Consortium.

Jointly launched by the Energy Department, the Office of Science and Technology Policy and IBM in late March, the consortium currently supports more than 65 active research projects, which together amount to a vast supercomputer-powered search for new findings on how the novel coronavirus spreads, how to effectively treat and mitigate it, and more. Dozens of national and international members are volunteering free compute time through the effort, providing at least 485 petaflops of capacity, a figure that is steadily growing, to generate new solutions against COVID-19 more rapidly.

“What started as a simple concept has grown to span three continents with over 40 supercomputer providers,” Dario Gil, director of IBM Research and consortium co-chair, told Nextgov last week. “In the face of a global pandemic like COVID-19, hopefully a once-in-a-lifetime event, the speed at which researchers can drive discovery is a critical factor in the search for a cure and it is essential that we combine forces.”

Gil and other members and researchers briefed Nextgov on how the work is unfolding, how they’re measuring success and the research the consortium is increasingly underpinning.

The Consortium’s Evolution

Energy’s Office of Science Director Chris Fall told Nextgov last week that since the consortium’s founding, its resources have been used to sort through billions of molecules to identify promising compounds that can be manufactured quickly and tested for potency against the novel coronavirus, to produce large data sets for studying variations in patient responses, and to perform airflow simulations on a new device that will allow doctors to use one ventilator to support multiple patients, among other work. The systems are delivering calculations, simulations and results in a matter of days that several scientists have noted would take months on traditional computers.

“From a small conversation three months ago, we overcame a myriad of institutional and organizational boundaries to establish the consortium,” Fall said, adding that the effort is “building an international team of COVID-19 researchers that are sharing their best ideas, methods and results to understand the virus and its effects on humans which will [allow] the world to ultimately conquer or confine the virus.”

In a recent interview, Energy’s Undersecretary for Science Paul Dabbar explained that any researcher interested in tapping into advanced computing capabilities can submit relevant research proposals through an online portal, and the consortium then reviews them for selection. An executive committee supports the group’s organization and helps steer policies, while a science committee evaluates the submitted proposals for potential impact. A third committee allocates time and cycles on the supercomputing machines once proposals are selected.

“What's really interesting about this from an organizational point of view is that it's basically a volunteer organization,” Dabbar noted.

As of July 1, the consortium had received more than 148 COVID-19 research proposals, with 78 approved and 68 up and running on the participating supercomputing resources, Energy confirmed. Though researchers are tapping into the assets free of charge, the work doesn’t come without cost. Dabbar said the consortium taps into some of the department’s user facilities and resources that were built and funded with taxpayer dollars. The effort incurs operating costs such as runtime, electricity and cooling for the machines, which Dabbar said are “relatively minor compared to actually building the capacity to begin with.”

“It does absolutely cost money,” Dabbar said. “But at the end of the day, a lot of this is taking advantage of what the American people invested in, and using the flexibility, and shifting it towards this problem.”

The combined supercomputing resources are speeding up the chase for answers and solutions against COVID-19, but that faster pace isn’t the only metric for success. IBM’s Gil said that, in the early days, “the establishment of the consortium and the efficiency we have achieved in expedited expert review of proposals and rapid matching of approved proposals to supercomputing systems, along with rapid on-boarding onto those systems would have to be considered our first major success.”

Those involved also measure success by the number of up-and-running research projects, and they highlighted that 27 projects already have experimental, clinical or policy transition plans in place. Insiders also consider it “a major achievement” that they were able to quickly bring together industry players, “many of whom are competitors,” as Gil noted, along with labs, federal agencies, universities and several international partners to share their systems.

NASA is one consortium member that has been involved in the initiative from the very beginning, when it was invited by OSTP, Piyush Mehrotra, chief of NASA’s Advanced Supercomputing, or NAS, Division, told Nextgov Thursday.

The division, at Ames Research Center in Silicon Valley, hosts the space agency’s supercomputers, which Mehrotra noted are typically used for large-scale simulations supporting NASA’s aerospace, earth science, space science and space exploration missions. But a selection of the agency’s supercomputing assets is also reserved for national priorities that transcend the agency’s scope.

“In order to understand COVID-19, and to develop treatments and vaccines, extensive research is required in complex areas such as epidemiology and molecular modeling—research that can be significantly accelerated by supercomputing resources,” Mehrotra explained. “We are therefore making the full reserve portion of NASA supercomputing resources available to researchers working on the COVID-19 response, along with providing our expertise and support to port and run their applications on NASA systems.”

Amazon Web Services also joined in the consortium’s first wave of members and participated in the initial roundtable discussion at the White House where the concept emerged in March. The company’s Vice President of Public Policy Shannon Kellogg told Nextgov in late May that, in joining, AWS “saw a clear opportunity to bring the benefits of cloud … to bear in the race for treatments and a vaccine.” The company has since provided cloud computing resources to more than a dozen of the consortium’s active projects, and according to Kellogg, provides “in-kind credits to the research teams, which provide them with cloud computing resources.” The tech giant’s team then communicates regularly with the researchers to help address technical questions.

“This effort has shown how collaboration and commitment from leaders across government, business, and academia can empower researchers and accelerate the pace of their work,” Kellogg said.

Besides IBM, NASA and AWS, other early members of the consortium include Google Cloud, Microsoft, the Massachusetts Institute of Technology, Rensselaer Polytechnic Institute and the National Science Foundation, as well as the Argonne, Lawrence Livermore, Los Alamos, Oak Ridge and Sandia national laboratories. The consortium is also expanding as it progresses. In April, the National Center for Atmospheric Research’s Wyoming Supercomputing Center, chipmaker AMD and graphics processing unit maker NVIDIA joined, among others.

Dell Technologies also began the process of joining in April, according to Janet Morss, a senior consultant for high performance computing at the company. It took about a month for the involvement to come to fruition, and the company is now donating cycles from the Zenith supercomputer and other resources.