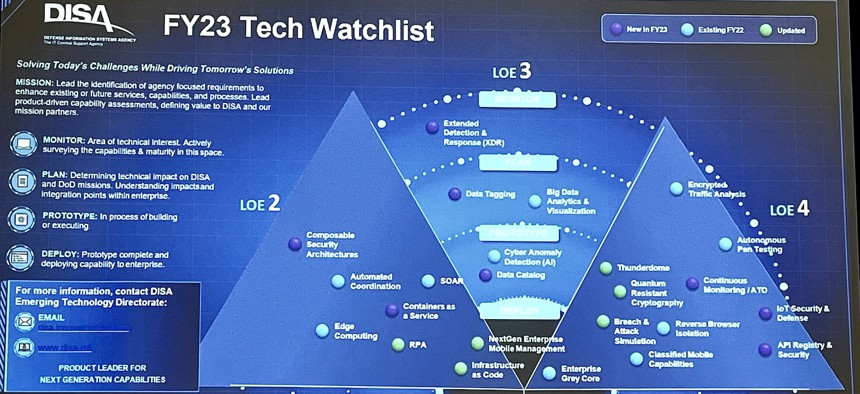

A slide depicting DISA's tech priorities for the near future, as seen at AFCEA's TechNet Cyber conference in Baltimore, May 2, 2023. Patrick Tucker / DEFENSE ONE

How DOD Is Experimenting with AI for Enhanced Cybersecurity

Automated penetration testing and threat hunting could enable much faster response to threats. But adversaries are also accelerating their capabilities.

BALTIMORE—The Defense Information Systems Agency is looking to expand its use of artificial intelligence to detect signs of intrusion on DOD networks much sooner.

Deepak Seth, the technical lead for emerging technologies at DISA, told conferencegoers at AFCEA’s TechNet Cyber conference that the agency wants to take all the data it can collect from different endpoints across DOD networks and have “an AI model help… predict or process all that and then give us some information that it will take a human a lot longer.” He said the agency is working with DARPA on the Cyber Hunting at Scale, or CHASE, program.

“The question really becomes, ‘How can we use AI to process all this data?’ and then we'll be able to detect threats that we address that we don't know about,” he said.

But DISA isn’t just looking to automate the detection of anomalies across all of its computers and devices. It’s also looking to automate attacks…on itself. One of the key capabilities DISA wants to develop is automated penetration testing on Defense Department networks.

“We’re trying to automate a lot of the functions that we would typically see a team of pen testers would do for us within the agency. Those resources are becoming more and more limited, if you will,” said Eric Mellot, DISA’s senior technical strategist. “We're looking to figure out ways in which we can leverage technology to do autonomous continuous validation…being able to bring in artificial intelligence to be able to think like a hacker.”

That follows earlier Pentagon experiments showing that red teams continually trying to hack Defense Department networks improved overall cybersecurity more, and faster, than periodically running through checklists on those systems.

Recent innovations in commercial AI, such as the wildly popular ChatGPT platform from OpenAI, illustrate the pace at which the technology is advancing and reinforce the need for the Defense Department to move more quickly. Those innovations could also make adoption easier for the Pentagon, as generative large language models reduce the skill level needed to experiment, Seth said. “It sort of reminds me of, you know, of early days of the internet, I mean, never has such an advanced technology had been so easily available. And, you know, the way I look at it is, how can we take this low-code, no-code approach to really bring down the barriers to adopt AI at scale.”

Still, DISA is worried that adversarial use of AI by countries like China, combined with new technologies like quantum computing, could outpace U.S. efforts. One of DISA’s key future research efforts is new forms of encryption that can withstand attacks from advanced quantum computers, which do not yet exist at a scale capable of breaking today's encryption.

“The concern that we have is…when a quantum computer is able to break RSA [public key encryption protocols], all the encryption that we rely on…all that could be impacted and the problem is that it will really render insecure RSA and then all the web protocols that use RSA,” Seth said. DISA is working with NIST, which last year selected its first set of quantum-resistant algorithms for standardization, intended to help users secure their data once traditional public-key cryptography can no longer be relied on.

DISA wants to refine and build on those for Defense Department use, Seth said. “Initially, we're focusing on asymmetric cryptography [or public-key cryptography]. But we're also beginning to look at, in addition to this…How do we better secure our optical backbone transport network? How can we safely distribute keys to some of the devices, but distribute these keys in a secure manner? So we're just beginning to look at what the impact is.”